Diffusion Models Are Real-Time Game Engines/zh: Difference between revisions

FelipeArias (talk | contribs) (Created page with "一个实时运行的神经模型是否能够以高质量模拟复杂的游戏?") |

FelipeArias (talk | contribs) (Created page with "==== 3.2.1 使用噪声增强缓解自回归漂移 ====") |

||

| Line 86: | Line 86: | ||

在推理过程中,我们需要运行 U-Net 去噪器(进行若干步)和自动编码器。在我们的硬件配置(TPU-v5)下,一次去噪步骤和自动编码器的评估各需 10 毫秒。如果我们以单步去噪器运行模型,设置中的最小总延迟为每帧 20 毫秒,即每秒 50 帧。通常情况下,生成扩散模型(如 Stable Diffusion)通过单次去噪步骤无法产生高质量结果,而是需要数十个采样步骤才能生成高质量图像。令人惊讶的是,我们发现只需 4 个 DDIM 采样步骤,就能稳健地模拟 DOOM(Song 等人,[https://arxiv.org/html/2408.14837v1#bib.bib33 2020])。实际上,我们观察到使用 4 步采样与使用 20 步或更多步采样相比,模拟质量没有下降(见附录 [https://arxiv.org/html/2408.14837v1#A1.SS4 A.4])。 | 在推理过程中,我们需要运行 U-Net 去噪器(进行若干步)和自动编码器。在我们的硬件配置(TPU-v5)下,一次去噪步骤和自动编码器的评估各需 10 毫秒。如果我们以单步去噪器运行模型,设置中的最小总延迟为每帧 20 毫秒,即每秒 50 帧。通常情况下,生成扩散模型(如 Stable Diffusion)通过单次去噪步骤无法产生高质量结果,而是需要数十个采样步骤才能生成高质量图像。令人惊讶的是,我们发现只需 4 个 DDIM 采样步骤,就能稳健地模拟 DOOM(Song 等人,[https://arxiv.org/html/2408.14837v1#bib.bib33 2020])。实际上,我们观察到使用 4 步采样与使用 20 步或更多步采样相比,模拟质量没有下降(见附录 [https://arxiv.org/html/2408.14837v1#A1.SS4 A.4])。 | ||

仅使用 4 个去噪步骤导致 U-Net 总耗时为 40 毫秒(包括自动编码器的推理总耗时为 50 毫秒),即每秒 20 帧。我们推测,在我们的案例中,较少步骤对质量影响可忽略不计,是由于以下因素的结合:(1) 受限的图像空间,以及 (2) 前一帧的强条件作用。 | |||

<div lang="en" dir="ltr" class="mw-content-ltr"> | <div lang="en" dir="ltr" class="mw-content-ltr"> | ||

Revision as of 00:24, 9 September 2024

作者: Dani Valevski(谷歌研究)、Yaniv Leviathan(谷歌研究)、Moab Arar(特拉维夫大学)、Shlomi Fruchter(谷歌 DeepMind)

ArXiv链接: https://arxiv.org/abs/2408.14837

项目网站: https://gamengen.github.io

摘要

我们介绍了GameNGen,这是第一个完全由神经模型驱动的游戏引擎,能够在长轨迹上与复杂环境进行高质量的实时交互。GameNGen 可以在单个 TPU 上以每秒超过 20 帧的速度交互模拟经典游戏 DOOM。下一帧预测的 PSNR 为 29.4,与有损 JPEG 压缩相当。在区分游戏短片和模拟片段方面,人类评分员的表现仅略好于随机概率。GameNGen 的训练分为两个阶段:(1) 一个强化学习代理学习玩游戏,并记录训练过程;(2) 训练一个扩散模型,以过去的帧和动作序列为条件生成下一帧。条件增强技术可在长轨迹上实现稳定的自动回归生成。

请参见 https://gamengen.github.io 获取演示视频。

1 介绍

计算机游戏是围绕以下“游戏循环”手动制作的软件系统:(1) 收集用户输入,(2) 更新游戏状态,(3) 将其渲染为屏幕像素。这个游戏循环以很高的帧率运行,为玩家营造出一个交互式虚拟世界的假象。这种游戏循环通常在标准计算机上运行,尽管也有许多在定制硬件上运行游戏的惊人尝试(例如,标志性游戏《毁灭战士》曾在烤面包机、微波炉、跑步机、照相机、iPod 上运行,甚至在 Minecraft 游戏中运行——仅举几例,请参见 https://www.reddit.com/r/itrunsdoom/),但在所有这些情况下,硬件仍然是直接模拟手动编写的游戏软件。此外,尽管游戏引擎千差万别,但所有引擎中的游戏状态更新和渲染逻辑都是由一套手动编程或配置的规则组成的。

近年来,生成模型在根据文本或图像等多模态输入生成图像和视频方面取得了重大进展。在这一浪潮的前沿,扩散模型成为非语言媒体生成的事实标准,如 Dall-E(Ramesh 等人,2022)、Stable Diffusion(Rombach 等人,2022)和 Sora(Brooks 等人,2024)。乍一看,模拟视频游戏的交互世界似乎与视频生成类似。然而,"交互式"世界模拟不仅仅是快速生成视频。因为生成过程中需要以输入动作流为条件,而输入动作流只能在生成时获取,这打破了现有扩散模型架构的一些假设。尤其是,它要求自回归地生成帧,这往往是不稳定的,并导致采样发散(见 3.2.1 节)。

有几项重要研究(Ha & Schmidhuber,2018;Kim 等人,2020;Bruce 等人,2024)(见第6节)使用神经模型来模拟交互式视频游戏。然而,这些方法大多在模拟游戏的复杂性、仿真速度、长时间的稳定性或视觉质量等方面存在局限性(见图2)。因此,自然而然地会问:

一个实时运行的神经模型是否能够以高质量模拟复杂的游戏?

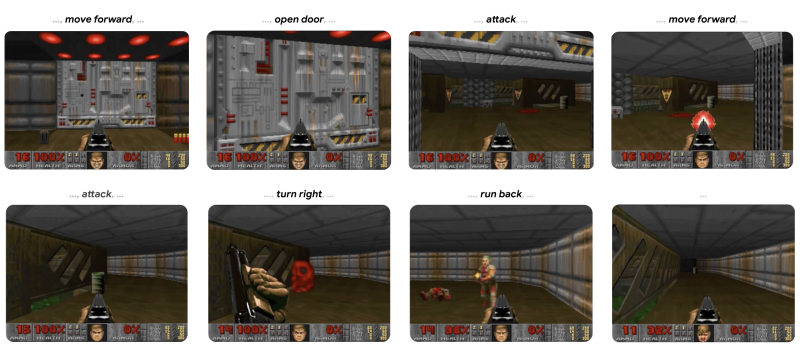

在这项工作中,我们证明答案是肯定的。具体来说,我们展示了一款复杂的视频游戏——标志性游戏《DOOM》,可以在神经网络(开放式 Stable Diffusion v1.4 的增强版(Rombach 等人,2022))上实时运行,同时获得与原始游戏相当的视觉质量。尽管这不是精确仿真,该神经模型能够执行复杂的游戏状态更新,例如统计生命值和弹药、攻击敌人、破坏物体、开门,以及在长轨迹上持续保持游戏状态。

GameNGen 回答了在通往游戏引擎新范式的道路上一个重要的问题,即游戏可以自动生成,就像近年来神经模型生成图像和视频一样。仍然存在关键问题,例如如何训练这些神经游戏引擎,以及如何有效地创建游戏,包括如何最佳地利用人类输入。尽管如此,我们对这种新范式的可能性感到非常兴奋。

2 互动世界仿真

一个交互环境由一个潜在状态空间、一个潜在空间的部分投影空间、一个部分投影函数、一组动作,以及一个转移概率函数,使得。

例如,在游戏 DOOM 中, 是程序的动态内存内容, 是渲染的屏幕像素, 是游戏的渲染逻辑, 是按键和鼠标移动的集合,而 是基于玩家输入的程序逻辑(包括任何潜在的非确定性)。

给定输入交互环境 和初始状态 ,一个“交互世界模拟”是一个“模拟分布函数” 。给定观测值之间的距离度量 ,一个“策略”,即给定过去动作和观测的代理动作分布 ,初始状态分布 和回合长度分布 ,交互世界模拟的目标是最小化 ,其中 ,,以及 是在执行代理策略 时从环境和模拟中抽取的观测值。重要的是,这些样本的条件动作总是通过代理与环境 交互获得,而条件观测既可以从 获得(“教师强迫目标”),也可以从模拟中获得(“自回归目标”)。

我们总是使用教师强迫目标来训练我们的生成模型。给定一个模拟分布函数 ,可以通过自回归地采样观测值来模拟环境 。

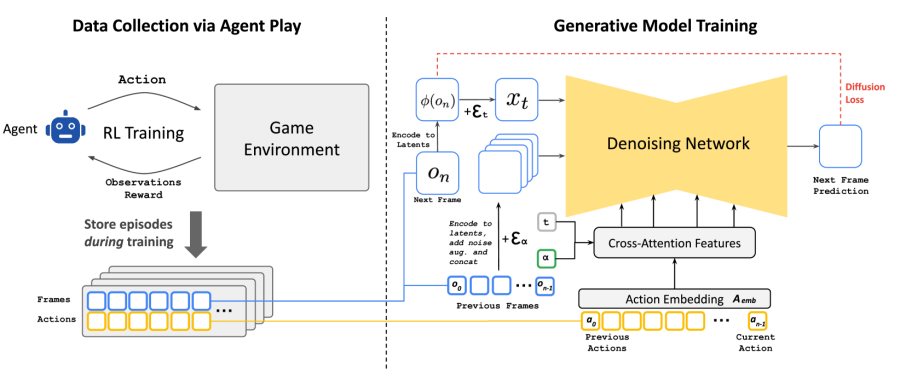

3 GameNGen

GameNGen(发音为“游戏引擎”)是一个生成扩散模型,它能够在第2节的设置下学习模拟游戏。为了收集该模型的训练数据,我们首先使用教师强制目标训练一个独立的模型与环境进行交互。这两个模型(代理和生成模型)依次进行训练。在训练过程中,代理的全部行为和观察语料 被保留下来,并在第二阶段成为生成模型的训练数据集。见图 3。

3.1 通过代理进行数据收集

我们的最终目标是让人类玩家与我们的仿真进行互动。为此,第2节中的策略即为“人类游戏策略”。由于我们无法直接大规模地从中取样,因此我们首先通过教一个自动代理来玩游戏,以此来近似人类游戏。与典型的强化学习设置不同,该设置旨在最大化游戏得分,我们的目标是生成与人类游戏类似的训练数据,或者至少在各种场景下包含足够多的多样化示例,以最大化训练数据的效率。为此,我们设计了一个简单的奖励函数,这是我们的方法中唯一与环境相关的部分(见附录A.3)。

我们在整个训练过程中记录了代理的训练轨迹,其中涵盖了不同技能水平的游戏。这组记录的轨迹构成了我们的数据集,用于训练生成模型(见第3.2节)。

3.2 训练生成扩散模型

现在,我们训练一个生成扩散模型,该模型以在前一阶段收集的代理轨迹(行动和观察)作为条件。

我们重新利用预训练的文本到图像扩散模型 Stable Diffusion v1.4(Rombach 等人,2022)。我们将模型 置于轨迹 的条件下,即在之前的动作 和观察(帧) 的序列条件下,并移除所有文本条件。具体来说,为了以动作为条件,我们仅需学习将每个动作(例如按下特定按键)嵌入为单个标记的 ,并将文本的交叉注意力替换为该编码动作序列。为了对观察(即之前的帧)进行条件化,我们使用自动编码器 将它们编码到潜在空间中,并在潜在通道维度中将它们串联到噪声潜在空间中(见图 3)。我们还尝试通过交叉注意力对这些过去的观察进行条件化,但没有观察到有意义的改进。

我们通过速度参数化训练模型,使得扩散损失最小化(Salimans & Ho, 2022b):

Failed to parse (syntax error): {\displaystyle \mathcal{L} = {{\mathbb{E}}_{t,\epsilon,T}\left\lbrack {\|{v{(\epsilon,x_{0},t)}} - {v_{\theta^{\prime}}{(x_{t},t,\{{\phi{(o_{i < n})}}\},\{{A_{emb}{(a_{i < n})}}\}})}}\|}_{2}^{2} \right\rbrack}} (1)

其中 Failed to parse (syntax error): {\displaystyle T = {\{ o_{i \leq n},a_{i \leq n}\}} \sim \mathcal{T}_{代理}} ,,,,,,而 是模型 的 v预测输出。噪声调度 是线性的,与 Rombach 等(2022)类似。

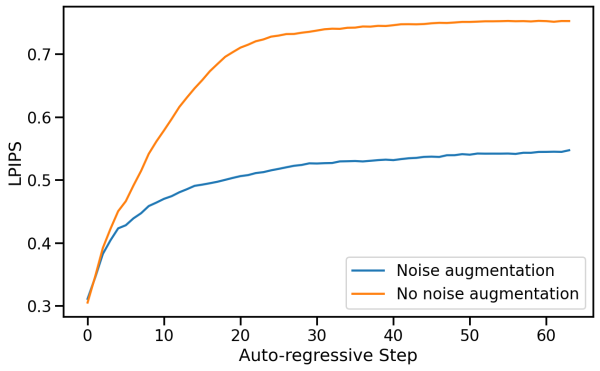

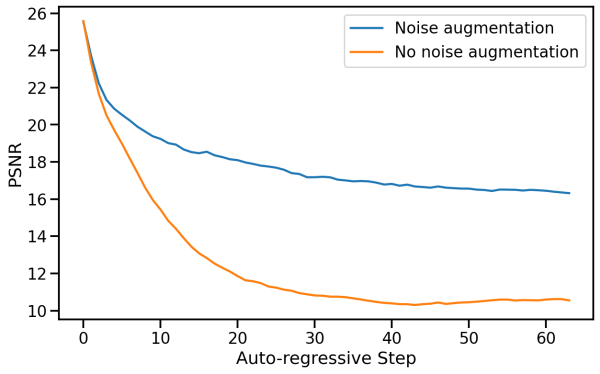

3.2.1 使用噪声增强缓解自回归漂移

如图4所示,教师强制训练和自动回归采样之间的领域偏移会导致误差积累和采样质量的快速下降。为了避免由于模型的自动回归应用而导致的这种偏差,我们在训练时向编码帧中添加不同程度的高斯噪声来扰动背景帧,并将噪声水平作为输入提供给模型,仿效 Ho 等人(2021)的方法。为此,我们对噪声水平 进行均匀采样,直至最大值,然后对其进行离散化,并为每个区间学习一个嵌入(见图3)。这使得网络能够纠正前几帧中的采样信息,对于长期保持帧质量至关重要。在推理过程中,可以控制添加的噪声水平以最大化质量,尽管我们发现,即使不添加噪声,结果也显著改善。我们将在5.2.2部分分析这种方法的影响。

3.2.2 潜在变量解码器微调

Stable Diffusion v1.4 的预训练自动编码器将 8x8 像素块压缩为 4 个潜通道,在预测游戏帧时会导致有意义的伪影,影响小细节,尤其是底栏 HUD(“抬头显示”)。为了在提高图像质量的同时利用预训练的知识,我们仅使用针对目标帧像素计算的 MSE 损失来训练潜在自动编码器的解码器。使用 LPIPS(Zhang 等人(2018))等感知损失可能会进一步提高质量,我们将其留待未来工作中研究。重要的是,请注意这个微调过程完全独立于 U-Net 微调过程,而且自回归生成不受其影响(我们仅对潜变量自回归地进行条件设置,而非像素)。附录 A.2 展示了对自动编码器进行微调和不进行微调的生成示例。

3.3 推理

3.3.1 设置

我们使用DDIM采样(Song等人,2022)。我们仅对过去观测条件采用了无分类器指导(Ho & Salimans,2022)。我们发现对过去动作条件的指导无法提高质量。我们使用的权重相对较小(1.5),因为较大的权重会产生伪影,而我们的自动回归采样则会放大这些伪影。

我们还尝试了同时生成 4 个样本并合并结果,希望能防止罕见的极端预测被采纳,并减少误差累积。我们尝试了对样本进行平均和选择最接近中位数的样本。平均效果略逊于单帧,而选择最接近中位数的样本效果仅略有提升。由于这两种方法都会将硬件需求提高到 4 个张量处理单元(TPU),因此我们决定不使用这些方法,但注意到这可能是未来研究的一个有趣领域。

3.3.2 去噪器采样步骤

在推理过程中,我们需要运行 U-Net 去噪器(进行若干步)和自动编码器。在我们的硬件配置(TPU-v5)下,一次去噪步骤和自动编码器的评估各需 10 毫秒。如果我们以单步去噪器运行模型,设置中的最小总延迟为每帧 20 毫秒,即每秒 50 帧。通常情况下,生成扩散模型(如 Stable Diffusion)通过单次去噪步骤无法产生高质量结果,而是需要数十个采样步骤才能生成高质量图像。令人惊讶的是,我们发现只需 4 个 DDIM 采样步骤,就能稳健地模拟 DOOM(Song 等人,2020)。实际上,我们观察到使用 4 步采样与使用 20 步或更多步采样相比,模拟质量没有下降(见附录 A.4)。

仅使用 4 个去噪步骤导致 U-Net 总耗时为 40 毫秒(包括自动编码器的推理总耗时为 50 毫秒),即每秒 20 帧。我们推测,在我们的案例中,较少步骤对质量影响可忽略不计,是由于以下因素的结合:(1) 受限的图像空间,以及 (2) 前一帧的强条件作用。

Since we do observe degradation when using just a single sampling step, we also experimented with model distillation similarly to (Yin et al., 2024; Wang et al., 2023) in the single-step setting. Distillation does help substantially there (allowing us to reach 50 FPS as above), but still comes at some cost to simulation quality, so we opt to use the 4-step version without distillation for our method (see Appendix A.4). This is an interesting area for further research.

We note that it is trivial to further increase the image generation rate substantially by parallelizing the generation of several frames on additional hardware, similarly to NVidia’s classic SLI Alternate Frame Rendering (AFR) technique. Similarly to AFR, the actual simulation rate would not increase and input lag would not reduce.

4 Experimental Setup

4.1 Agent Training

The agent model is trained using PPO (Schulman et al., 2017), with a simple CNN as the feature network, following Mnih et al. (2015). It is trained on CPU using the Stable Baselines 3 infrastructure (Raffin et al., 2021). The agent is provided with downscaled versions of the frame images and in-game map, each at resolution 160x120. The agent also has access to the last 32 actions it performed. The feature network computes a representation of size 512 for each image. PPO’s actor and critic are 2-layer MLP heads on top of a concatenation of the outputs of the image feature network and the sequence of past actions. We train the agent to play the game using the Vizdoom environment (Wydmuch et al., 2019). We run 8 games in parallel, each with a replay buffer size of 512, a discount factor , and an entropy coefficient of . In each iteration, the network is trained using a batch size of 64 for 10 epochs, with a learning rate of 1e-4. We perform a total of 10M environment steps.

4.2 Generative Model Training

We train all simulation models from a pretrained checkpoint of Stable Diffusion 1.4, unfreezing all U-Net parameters. We use a batch size of 128 and a constant learning rate of 2e-5, with the Adafactor optimizer without weight decay (Shazeer & Stern, 2018) and gradient clipping of 1.0. We change the diffusion loss parameterization to be v-prediction (Salimans & Ho 2022a). The context frames condition is dropped with probability 0.1 to allow CFG during inference. We train using 128 TPU-v5e devices with data parallelization. Unless noted otherwise, all results in the paper are after 700,000 training steps. For noise augmentation (Section 3.2.1), we use a maximal noise level of 0.7, with 10 embedding buckets. We use a batch size of 2,048 for optimizing the latent decoder; other training parameters are identical to those of the denoiser. For training data, we use all trajectories played by the agent during RL training as well as evaluation data during training, unless mentioned otherwise. Overall, we generate 900M frames for training. All image frames (during training, inference, and conditioning) are at a resolution of 320x240 padded to 320x256. We use a context length of 64 (i.e., the model is provided its own last 64 predictions as well as the last 64 actions).

5 Results

5.1 Simulation Quality

Overall, our method achieves a simulation quality comparable to the original game over long trajectories in terms of image quality. For short trajectories, human raters are only slightly better than random chance at distinguishing between clips of the simulation and the actual game.

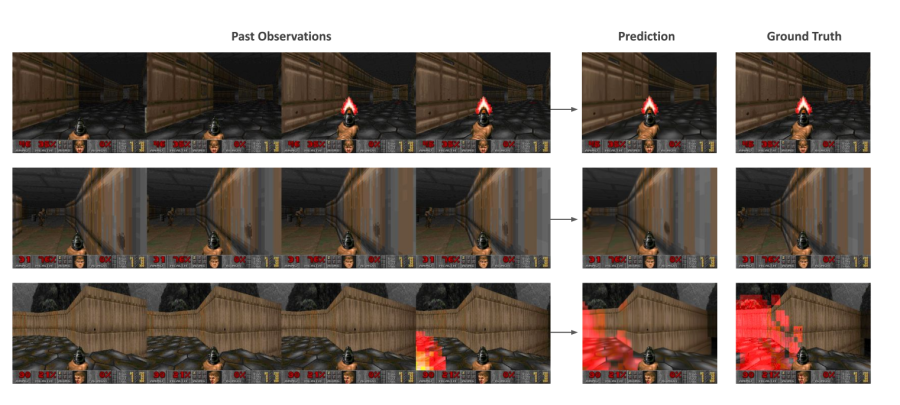

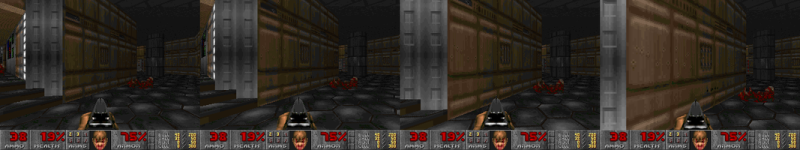

Image Quality. We measure LPIPS (Zhang et al., 2018) and PSNR using the teacher-forcing setup described in Section 2, where we sample an initial state and predict a single frame based on a trajectory of ground-truth past observations. When evaluated over a random holdout of 2048 trajectories taken in 5 different levels, our model achieves a PSNR of and an LPIPS of . The PSNR value is similar to lossy JPEG compression with quality settings of 20-30 (Petric & Milinkovic, 2018). Figure 5 shows examples of model predictions and the corresponding ground truth samples.

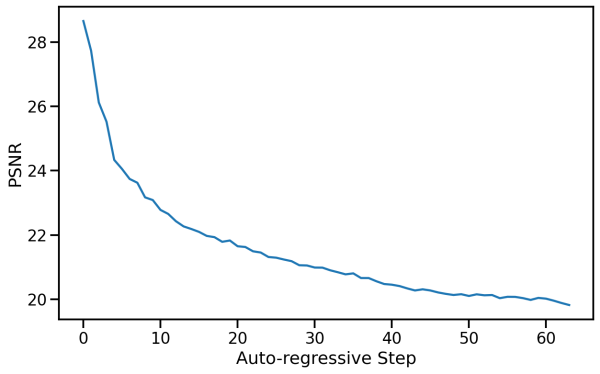

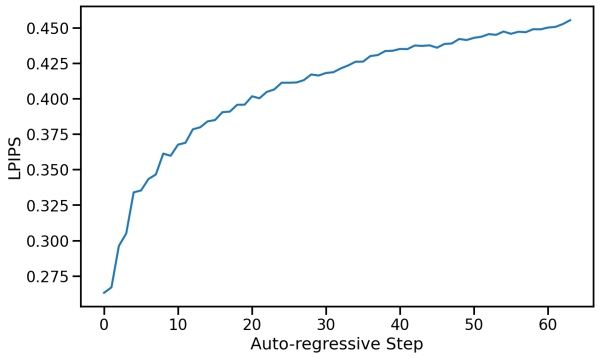

Video Quality. We use the auto-regressive setup described in Section 2, where we iteratively sample frames following the sequences of actions defined by the ground-truth trajectory, while conditioning the model on its own past predictions. When sampled auto-regressively, the predicted and ground-truth trajectories often diverge after a few steps, mostly due to the accumulation of small amounts of different movement velocities between frames in each trajectory. For that reason, per-frame PSNR and LPIPS values gradually decrease and increase respectively, as can be seen in Figure 6. The predicted trajectory is still similar to the actual game in terms of content and image quality, but per-frame metrics are limited in their ability to capture this (see Appendix A.1 for samples of auto-regressively generated trajectories).

We therefore measure the FVD (Unterthiner et al., 2019) computed over a random holdout of 512 trajectories, measuring the distance between the predicted and ground truth trajectory distributions, for simulations of length 16 frames (0.8 seconds) and 32 frames (1.6 seconds). For 16 frames, our model obtains an FVD of . For 32 frames, our model obtains an FVD of .

Human Evaluation. As another measurement of simulation quality, we provided 10 human raters with 130 random short clips (of lengths 1.6 seconds and 3.2 seconds) of our simulation side by side with the real game. The raters were tasked with recognizing the real game (see Figure 14 in Appendix A.6). The raters only choose the actual game over the simulation in 58% or 60% of the time (for the 1.6 seconds and 3.2 seconds clips, respectively).

5.2 Ablations

To evaluate the importance of the different components of our methods, we sample trajectories from the evaluation dataset and compute LPIPS and PSNR metrics between the ground truth and the predicted frames.

5.2.1 Context Length

We evaluate the impact of changing the number of past observations in the conditioning context by training models with (recall that our method uses ). This affects both the number of historical frames and actions. We train the models for 200,000 steps keeping the decoder frozen and evaluate on test-set trajectories from 5 levels. See the results in Table 1. As expected, we observe that generation quality improves with the length of the context. Interestingly, we observe that while the improvement is large at first (e.g., between 1 and 2 frames), we quickly approach an asymptote and further increasing the context size provides only small improvements in quality. This is somewhat surprising as even with our maximal context length, the model only has access to a little over 3 seconds of history. Notably, we observe that much of the game state is persisted for much longer periods (see Section 7). While the length of the conditioning context is an important limitation, Table 1 hints that we’d likely need to change the architecture of our model to efficiently support longer contexts, and employ better selection of the past frames to condition on, which we leave for future work.

Table 1: Number of history frames. We ablate the number of history frames used as context using 8912 test-set examples from 5 levels. More frames generally improve both PSNR and LPIPS metrics.

| History Context Length | PSNR | LPIPS |

|---|---|---|

| 64 | ||

| 32 | ||

| 16 | ||

| 8 | ||

| 4 | ||

| 2 | ||

| 1 |

5.2.2 Noise Augmentation

To ablate the impact of noise augmentation, we train a model without added noise. We evaluate both our standard model with noise augmentation and the model without added noise (after 200k training steps) auto-regressively and compute PSNR and LPIPS metrics between the predicted frames and the ground-truth over a random holdout of 512 trajectories. We report average metric values for each auto-regressive step up to a total of 64 frames in Figure 7.

Without noise augmentation, LPIPS distance from the ground truth increases rapidly compared to our standard noise-augmented model, while PSNR drops, indicating a divergence of the simulation from ground truth.

5.2.3 Agent Play

We compare training on agent-generated data to training on data generated using a random policy. For the random policy, we sample actions following a uniform categorical distribution that doesn’t depend on the observations. We compare the random and agent datasets by training 2 models for 700k steps along with their decoder. The models are evaluated on a dataset of 2048 human-play trajectories from 5 levels. We compare the first frame of generation, conditioned on a history context of 64 ground-truth frames, as well as a frame after 3 seconds of auto-regressive generation.

Overall, we observe that training the model on random trajectories works surprisingly well, but is limited by the exploration ability of the random policy. When comparing the single frame generation, the agent works only slightly better, achieving a PSNR of 25.06 vs 24.42 for the random policy. When comparing a frame after 3 seconds of auto-regressive generation, the difference increases to 19.02 vs 16.84. When playing with the model manually, we observe that some areas are very easy for both, some areas are very hard for both, and in some, the agent performs much better. With that, we manually split 456 examples into 3 buckets: easy, medium, and hard, manually, based on their distance from the starting position in the game. We observe that on the easy and hard sets, the agent performs only slightly better than random, while on the medium set, the difference is much larger in favor of the agent as expected (see Table 2). See Figure 13 in Appendix A.5 for an example of the scores during a single session of human play.

Table 2: Performance on Different Difficulty Levels. We compare the performance of models trained using Agent-generated and Random-generated data across easy, medium, and hard splits of the dataset. Easy and medium have 112 items, hard has 232 items. Metrics are computed for each trajectory on a single frame after 3 seconds.

| Difficulty Level | Data Generation Policy | PSNR | LPIPS |

|---|---|---|---|

| Easy | Agent | ||

| Random | |||

| Medium | Agent | ||

| Random | |||

| Hard | Agent | ||

| Random |

6 Related Work

Interactive 3D Simulation

Simulating visual and physical processes of 2D and 3D environments and allowing interactive exploration of them is an extensively developed field in computer graphics (Akenine-Möller et al., 2018). Game Engines, such as Unreal and Unity, are software that processes representations of scene geometry and renders a stream of images in response to user interactions. The game engine is responsible for keeping track of all world state, e.g., the player position and movement, objects, character animation, and lighting. It also tracks the game logic, e.g., points gained by accomplishing game objectives. Film and television productions use variants of ray-tracing (Shirley & Morley, 2008), which are too slow and compute-intensive for real-time applications. In contrast, game engines must keep a very high frame rate (typically 30-60 FPS), and therefore rely on highly-optimized polygon rasterization, often accelerated by GPUs. Physical effects such as shadows, particles, and lighting are often implemented using efficient heuristics rather than physically accurate simulation.

Neural 3D Simulation

Neural methods for reconstructing 3D representations have made significant advances over the last years. NeRFs (Mildenhall et al., 2020) parameterize radiance fields using a deep neural network that is specifically optimized for a given scene from a set of images taken from various camera poses. Once trained, novel points of view of the scene can be sampled using volume rendering methods. Gaussian Splatting (Kerbl et al., 2023) approaches build on NeRFs but represent scenes using 3D Gaussians and adapted rasterization methods, unlocking faster training and rendering times. While demonstrating impressive reconstruction results and real-time interactivity, these methods are often limited to static scenes.

Video Diffusion Models

Diffusion models achieved state-of-the-art results in text-to-image generation (Saharia et al., 2022; Rombach et al., 2022; Ramesh et al., 2022; Podell et al., 2023), a line of work that has also been applied for text-to-video generation tasks (Ho et al., 2022; Blattmann et al., 2023b; a; Gupta et al., 2023; Girdhar et al., 2023; Bar-Tal et al., 2024). Despite impressive advancements in realism, text adherence, and temporal consistency, video diffusion models remain too slow for real-time applications. Our work extends this line of work and adapts it for real-time generation conditioned autoregressively on a history of past observations and actions.

Game Simulation and World Models

Several works attempted to train models for game simulation with actions inputs. Yang et al. (2023) build a diverse dataset of real-world and simulated videos and train a diffusion model to predict a continuation video given a previous video segment and a textual description of an action. Menapace et al. (2021) and Bruce et al. (2024) focus on unsupervised learning of actions from videos. Menapace et al. (2024) converts textual prompts to game states, which are later converted to a 3D representation using NeRF. Unlike these works, we focus on interactive playable real-time simulation, and demonstrate robustness over long-horizon trajectories. We leverage an RL agent to explore the game environment and create rollouts of observations and interactions for training our interactive game model. Another line of work explored learning a predictive model of the environment and using it for training an RL agent. Ha & Schmidhuber (2018) train a Variational Auto-Encoder (Kingma & Welling, 2014) to encode game frames into a latent vector, and then use an RNN to mimic the VizDoom game environment, training on random rollouts from a random policy (i.e., selecting an action at random). Then a controller policy is learned by playing within the “hallucinated” environment. Hafner et al. (2020) demonstrate that an RL agent can be trained entirely on episodes generated by a learned world model in latent space. Also close to our work is Kim et al. (2020), which uses an LSTM architecture for modeling the world state, coupled with a convolutional decoder for producing output frames and jointly trained under an adversarial objective. While this approach seems to produce reasonable results for simple games like PacMan, it struggles with simulating the complex environment of VizDoom and produces blurry samples. In contrast, GameNGen is able to generate samples comparable to those of the original game; see Figure 2. Finally, concurrently with our work, Alonso et al. (2024) train a diffusion world model to predict the next observation given observation history, and iteratively train the world model and an RL model on Atari games.

DOOM

When DOOM was released in 1993, it revolutionized the gaming industry. Introducing groundbreaking 3D graphics technology, it became a cornerstone of the first-person shooter genre, influencing countless other games. DOOM was studied by numerous research works. It provides an open-source implementation and a native resolution that is low enough for small-sized models to simulate while being complex enough to be a challenging test case. Finally, the authors have spent countless youth hours with the game. It was a trivial choice to use it in this work.

7 Discussion

Summary. We introduced GameNGen and demonstrated that high-quality real-time gameplay at 20 frames per second is possible on a neural model. We also provided a recipe for converting an interactive piece of software such as a computer game into a neural model.

Limitations. GameNGen suffers from a limited amount of memory. The model only has access to a little over 3 seconds of history, so it’s remarkable that much of the game logic is persisted for drastically longer time horizons. While some of the game state is persisted through screen pixels (e.g., ammo and health tallies, available weapons, etc.), the model likely learns strong heuristics that allow meaningful generalizations. For example, from the rendered view, the model learns to infer the player’s location, and from the ammo and health tallies, the model might infer whether the player has already been through an area and defeated the enemies there. That said, it’s easy to create situations where this context length is not enough. Continuing to increase the context size with our existing architecture yields only marginal benefits (Section 5.2.1), and the model’s short context length remains an important limitation. The second important limitation is the remaining differences between the agent’s behavior and those of human players. For example, our agent, even at the end of training, still does not explore all of the game’s locations and interactions, leading to erroneous behavior in those cases.

Future Work. We demonstrate GameNGen on the classic game DOOM. It would be interesting to test it on other games or more generally on other interactive software systems. We note that nothing in our technique is DOOM specific except for the reward function for the RL-agent. We plan on addressing that in future work. While GameNGen manages to maintain game state accurately, it isn’t perfect, as per the discussion above. A more sophisticated architecture might be needed to mitigate these issues. GameNGen currently has a limited capability to leverage more than a minimal amount of memory. Experimenting with further expanding the memory effectively could be critical for more complex games/software. GameNGen runs at 20 or 50 FPS22Faster than the original game DOOM ran on some of the authors’ 80386 machines at the time! on a TPUv5. It would be interesting to experiment with further optimization techniques to get it to run at higher frame rates and on consumer hardware.

Towards a New Paradigm for Interactive Video Games. Today, video games are programmed by humans. GameNGen is a proof-of-concept for one part of a new paradigm where games are weights of a neural model, not lines of code. GameNGen shows that an architecture and model weights exist such that a neural model can effectively run a complex game (DOOM) interactively on existing hardware. While many important questions remain, we are hopeful that this paradigm could have important benefits. For example, the development process for video games under this new paradigm might be less costly and more accessible, whereby games could be developed and edited via textual descriptions or example images. A small part of this vision, namely creating modifications or novel behaviors for existing games, might be achievable in the shorter term. For example, we might be able to convert a set of frames into a new playable level or create a new character just based on example images, without having to author code. Other advantages of this new paradigm include strong guarantees on frame rates and memory footprints. We have not experimented with these directions yet and much more work is required here, but we are excited to try! Hopefully, this small step will someday contribute to a meaningful improvement in people’s experience with video games, or maybe even more generally, in day-to-day interactions with interactive software systems.

Acknowledgements

We’d like to extend a huge thank you to Eyal Segalis, Eyal Molad, Matan Kalman, Nataniel Ruiz, Amir Hertz, Matan Cohen, Yossi Matias, Yael Pritch, Danny Lumen, Valerie Nygaard, the Theta Labs and Google Research teams, and our families for insightful feedback, ideas, suggestions, and support.

Contribution

- Dani Valevski: Developed much of the codebase, tuned parameters and details across the system, added autoencoder fine-tuning, agent training, and distillation.

- Yaniv Leviathan: Proposed project, method, and architecture, developed the initial implementation, key contributor to implementation and writing.

- Moab Arar: Led auto-regressive stabilization with noise-augmentation, many of the ablations, and created the dataset of human-play data.

- Shlomi Fruchter: Proposed project, method, and architecture. Project leadership, initial implementation using DOOM, main manuscript writing, evaluation metrics, random policy data pipeline.

Correspondence to shlomif@google.com and leviathan@google.com.

References

- Akenine-Möller et al. (2018) Tomas Akenine-Möller, Eric Haines, and Naty Hoffman. Real-Time Rendering, Fourth Edition. A. K. Peters, Ltd., USA, 4th edition, 2018. ISBN 0134997832.

- Alonso et al. (2024) Eloi Alonso, Adam Jelley, Vincent Micheli, Anssi Kanervisto, Amos Storkey, Tim Pearce, and François Fleuret. Diffusion for world modeling: Visual details matter in Atari, 2024.

- Bar-Tal et al. (2024) Omer Bar-Tal, Hila Chefer, Omer Tov, Charles Herrmann, Roni Paiss, Shiran Zada, Ariel Ephrat, Junhwa Hur, Guanghui Liu, Amit Raj, Yuanzhen Li, Michael Rubinstein, Tomer Michaeli, Oliver Wang, Deqing Sun, Tali Dekel, and Inbar Mosseri. Lumiere: A space-time diffusion model for video generation, 2024. URL: [1](https://arxiv.org/abs/2401.12945).

- Blattmann et al. (2023a) Andreas Blattmann, Tim Dockhorn, Sumith Kulal, Daniel Mendelevitch, Maciej Kilian, Dominik Lorenz, Yam Levi, Zion English, Vikram Voleti, Adam Letts, Varun Jampani, and Robin Rombach. Stable video diffusion: Scaling latent video diffusion models to large datasets, 2023a. URL: [2](https://arxiv.org/abs/2311.15127).

- Blattmann et al. (2023b) Andreas Blattmann, Robin Rombach, Huan Ling, Tim Dockhorn, Seung Wook Kim, Sanja Fidler, and Karsten Kreis. Align your latents: High-resolution video synthesis with latent diffusion models, 2023b. URL: [3](https://arxiv.org/abs/2304.08818).

- Brooks et al. (2024) Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Li Jing, David Schnurr, Joe Taylor, Troy Luhman, Eric Luhman, Clarence Ng, Ricky Wang, and Aditya Ramesh. Video generation models as world simulators, 2024. URL: [4](https://openai.com/research/video-generation-models-as-world-simulators).

- Bruce et al. (2024) Jake Bruce, Michael Dennis, Ashley Edwards, Jack Parker-Holder, Yuge Shi, Edward Hughes, Matthew Lai, Aditi Mavalankar, Richie Steigerwald, Chris Apps, Yusuf Aytar, Sarah Bechtle, Feryal Behbahani, Stephanie Chan, Nicolas Heess, Lucy Gonzalez, Simon Osindero, Sherjil Ozair, Scott Reed, Jingwei Zhang, Konrad Zolna, Jeff Clune, Nando de Freitas, Satinder Singh, and Tim Rocktäschel. Genie: Generative interactive environments, 2024. URL: [5](https://arxiv.org/abs/2402.15391).

- Girdhar et al. (2023) Rohit Girdhar, Mannat Singh, Andrew Brown, Quentin Duval, Samaneh Azadi, Sai Saketh Rambhatla, Akbar Shah, Xi Yin, Devi Parikh, and Ishan Misra. Emu video: Factorizing text-to-video generation by explicit image conditioning, 2023. URL: [6](https://arxiv.org/abs/2311.10709).

- Gupta et al. (2023) Agrim Gupta, Lijun Yu, Kihyuk Sohn, Xiuye Gu, Meera Hahn, Li Fei-Fei, Irfan Essa, Lu Jiang, and José Lezama. Photorealistic video generation with diffusion models, 2023. URL: [7](https://arxiv.org/abs/2312.06662).

- Ha & Schmidhuber (2018) David Ha and Jürgen Schmidhuber. World models, 2018.

- Hafner et al. (2020) Danijar Hafner, Timothy Lillicrap, Jimmy Ba, and Mohammad Norouzi. Dream to control: Learning behaviors by latent imagination, 2020. URL: [8](https://arxiv.org/abs/1912.01603).

- Ho & Salimans (2022) Jonathan Ho and Tim Salimans. Classifier-free diffusion guidance, 2022. URL: [9](https://arxiv.org/abs/2207.12598).

- Ho et al. (2021) Jonathan Ho, Chitwan Saharia, William Chan, David J Fleet, Mohammad Norouzi, and Tim Salimans. Cascaded diffusion models for high fidelity image generation. arXiv preprint arXiv:2106.15282, 2021.

- Ho et al. (2022) Jonathan Ho, William Chan, Chitwan Saharia, Jay Whang, Ruiqi Gao, Alexey A. Gritsenko, Diederik P. Kingma, Ben Poole, Mohammad Norouzi, David J. Fleet, and Tim Salimans. Imagen video: High definition video generation with diffusion models. ArXiv, abs/2210.02303, 2022. URL: [10](https://api.semanticscholar.org/CorpusID:252715883).

- Kerbl et al. (2023) Bernhard Kerbl, Georgios Kopanas, Thomas Leimkühler, and George Drettakis. 3d gaussian splatting for real-time radiance field rendering. ACM Transactions on Graphics, 42(4), July 2023. URL: [11](https://repo-sam.inria.fr/fungraph/3d-gaussian-splatting/).

- Kim et al. (2020) Seung Wook Kim, Yuhao Zhou, Jonah Philion, Antonio Torralba, and Sanja Fidler. Learning to Simulate Dynamic Environments with GameGAN. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Jun. 2020.

- Kingma & Welling (2014) Diederik P. Kingma and Max Welling. Auto-Encoding Variational Bayes. In 2nd International Conference on Learning Representations, ICLR 2014, Banff, AB, Canada, April 14-16, 2014, Conference Track Proceedings, 2014.

- Menapace et al. (2021) Willi Menapace, Stéphane Lathuilière, Sergey Tulyakov, Aliaksandr Siarohin, and Elisa Ricci. Playable video generation, 2021. URL: [12](https://arxiv.org/abs/2101.12195).

- Menapace et al. (2024) Willi Menapace, Aliaksandr Siarohin, Stéphane Lathuilière, Panos Achlioptas, Vladislav Golyanik, Sergey Tulyakov, and Elisa Ricci. Promptable game models: Text-guided game simulation via masked diffusion models. ACM Transactions on Graphics, 43(2):1–16, January 2024. doi: [10.1145/3635705](http://dx.doi.org/10.1145/3635705).

- Mildenhall et al. (2020) Ben Mildenhall, Pratul P. Srinivasan, Matthew Tancik, Jonathan T. Barron, Ravi Ramamoorthi, and Ren Ng. Nerf: Representing scenes as neural radiance fields for view synthesis. In ECCV, 2020.

- Mnih et al. (2015) Volodymyr Mnih, Koray Kavukcuoglu, David Silver, Andrei A. Rusu, Joel Veness, Marc G. Bellemare, Alex Graves, Martin A. Riedmiller, Andreas Kirkeby Fidjeland, Georg Ostrovski, Stig Petersen, Charlie Beattie, Amir Sadik, Ioannis Antonoglou, Helen King, Dharshan Kumaran, Daan Wierstra, Shane Legg, and Demis Hassabis. Human-level control through deep reinforcement learning. Nature, 518:529–533, 2015. URL: [13](https://api.semanticscholar.org/CorpusID:205242740).

- Petric & Milinkovic (2018) Danko Petric and Marija Milinkovic. Comparison between cs and jpeg in terms of image compression, 2018. URL: [14](https://arxiv.org/abs/1802.05114).

- Podell et al. (2023) Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. Sdxl: Improving latent diffusion models for high-resolution image synthesis. arXiv preprint arXiv:2307.01952, 2023.

- Raffin et al. (2021) Antonin Raffin, Ashley Hill, Adam Gleave, Anssi Kanervisto, Maximilian Ernestus, and Noah Dormann. Stable-baselines3: Reliable reinforcement learning implementations. Journal of Machine Learning Research, 22(268):1–8, 2021. URL: [15](http://jmlr.org/papers/v22/20-1364.html).

- Ramesh et al. (2022) Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. Hierarchical text-conditional image generation with clip latents. arXiv preprint arXiv:2204.06125, 2022.

- Rombach et al. (2022) Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp. 10684–10695, 2022.

- Saharia et al. (2022) Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily L Denton, Kamyar Ghasemipour, Raphael Gontijo Lopes, Burcu Karagol Ayan, Tim Salimans, et al. Photorealistic text-to-image diffusion models with deep language understanding. Advances in Neural Information Processing Systems, 35:36479–36494, 2022.

- Salimans & Ho (2022a) Tim Salimans and Jonathan Ho. Progressive distillation for fast sampling of diffusion models. In The Tenth International Conference on Learning Representations, ICLR 2022, Virtual Event, April 25-29, 2022. OpenReview.net, 2022a. URL: [16](https://openreview.net/forum?id=TIdIXIpzhoI).

- Salimans & Ho (2022b) Tim Salimans and Jonathan Ho. Progressive distillation for fast sampling of diffusion models, 2022b. URL: [17](https://arxiv.org/abs/2202.00512).

- Schulman et al. (2017) John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. CoRR, abs/1707.06347, 2017. URL: [18](http://arxiv.org/abs/1707.06347).

- Shazeer & Stern (2018) Noam Shazeer and Mitchell Stern. Adafactor: Adaptive learning rates with sublinear memory cost. CoRR, abs/1804.04235, 2018. URL: [19](http://arxiv.org/abs/1804.04235).

- Shirley & Morley (2008) P. Shirley and R.K. Morley. Realistic Ray Tracing, Second Edition. Taylor & Francis, 2008. ISBN 9781568814612. URL: [20](https://books.google.ch/books?id=knpN6mnhJ8QC).

- Song et al. (2020) Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. arXiv:2010.02502, October 2020. URL: [21](https://arxiv.org/abs/2010.02502).

- Song et al. (2022) Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models, 2022. URL: [22](https://arxiv.org/abs/2010.02502).

- Unterthiner et al. (2019) Thomas Unterthiner, Sjoerd van Steenkiste, Karol Kurach, Raphaël Marinier, Marcin Michalski, and Sylvain Gelly. FVD: A new metric for video generation. In Deep Generative Models for Highly Structured Data, ICLR 2019 Workshop, New Orleans, Louisiana, United States, May 6, 2019, 2019.

- Wang et al. (2023) Zhengyi Wang, Cheng Lu, Yikai Wang, Fan Bao, Chongxuan Li, Hang Su, and Jun Zhu. Prolificdreamer: High-fidelity and diverse text-to-3d generation with variational score distillation. arXiv preprint arXiv:2305.16213, 2023.

- Wydmuch et al. (2019) Marek Wydmuch, Michał Kempka, and Wojciech Jaśkowski. ViZDoom Competitions: Playing Doom from Pixels. IEEE Transactions on Games, 11(3):248–259, 2019. doi: [10.1109/TG.2018.2877047](http://dx.doi.org/10.1109/TG.2018.2877047).

- Yang et al. (2023) Mengjiao Yang, Yilun Du, Kamyar Ghasemipour, Jonathan Tompson, Dale Schuurmans, and Pieter Abbeel. Learning interactive real-world simulators. arXiv preprint arXiv:2310.06114, 2023.

- Yin et al. (2024) Tianwei Yin, Michaël Gharbi, Richard Zhang, Eli Shechtman, Frédo Durand, William T Freeman, and Taesung Park. One-step diffusion with distribution matching distillation. In CVPR, 2024.

- Zhang et al. (2018) Richard Zhang, Phillip Isola, Alexei A. Efros, Eli Shechtman, and Oliver Wang. The unreasonable effectiveness of deep features as a perceptual metric. In CVPR, 2018.

Appendix A Appendix

A.1 Samples

Figs. [8](https://arxiv.org/html/2408.14837v1#A1.F8), [9](https://arxiv.org/html/2408.14837v1#A1.F9), [10](https://arxiv.org/html/2408.14837v1#A1.F10), [11](https://arxiv.org/html/2408.14837v1#A1.F11) provide selected samples from GameNGen.